How SFIA supports AI assurance and governance

Artificial intelligence systems introduce new risks, new accountabilities and new patterns of responsibility across organisations.

AI governance and assurance are not single roles. They are a coordinated set of responsibilities spanning engineering, operational, governance, change and independent oversight functions.

SFIA provides a structured, role-based way to describe and align these responsibilities clearly and consistently.

Moving beyond job titles

Many organisations are creating new AI-related job titles:

- AI governance lead

- Responsible AI specialist

- AI auditor

- AI assurance manager

- MLOps engineer

Titles alone do not define accountability.

SFIA separates capability into two clear dimensions:

- Professional skills: what a role is responsible for doing

- Levels of responsibility: the degree of autonomy, influence and accountability applied

This allows AI governance and assurance to be described precisely without creating entirely new frameworks.
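The two dimensions can be sketched as a small data model. This is a minimal illustration, not part of SFIA itself: the class names (`Level`, `SkillAtLevel`, `RoleProfile`), the `same_capability` helper and the skill-to-level assignments are all hypothetical, and the level labels are abbreviated from SFIA's published level names. It shows how two different job titles can describe the same capability once skills and levels are stated explicitly.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    """SFIA's seven levels of responsibility (labels abbreviated)."""
    FOLLOW = 1
    ASSIST = 2
    APPLY = 3
    ENABLE = 4
    ENSURE_ADVISE = 5
    INITIATE_INFLUENCE = 6
    SET_STRATEGY = 7


@dataclass(frozen=True)
class SkillAtLevel:
    """One professional skill held at one level of responsibility."""
    skill: str
    level: Level


@dataclass(frozen=True)
class RoleProfile:
    """A job title plus the skills-at-level that actually define it."""
    title: str
    skills: frozenset[SkillAtLevel]


def same_capability(a: RoleProfile, b: RoleProfile) -> bool:
    """Two roles are equivalent if their skills and levels match, whatever the title."""
    return a.skills == b.skills


# Hypothetical assignments, for illustration only.
governance_skills = frozenset({
    SkillAtLevel("risk management", Level.ENSURE_ADVISE),
    SkillAtLevel("governance", Level.ENSURE_ADVISE),
})
lead = RoleProfile("AI governance lead", governance_skills)
manager = RoleProfile("AI assurance manager", governance_skills)
engineer = RoleProfile(
    "MLOps engineer",
    frozenset({SkillAtLevel("machine learning", Level.ENABLE)}),
)

print(same_capability(lead, manager))   # True: different titles, same capability
print(same_capability(lead, engineer))  # False
```

Comparing on skills and levels rather than titles is the design choice here: the title is kept only as a label and plays no part in the comparison.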

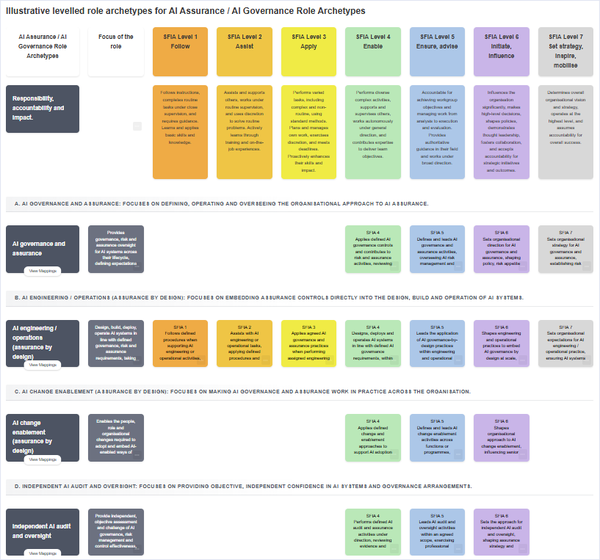

Four complementary role archetypes

The interactive grid illustrates four AI-related archetypes commonly seen in organisations.

1. AI engineering / operations (assurance by design)

- Designs, builds and operates AI-enabled systems responsibly.

- Governance literacy is required — but governance ownership is not.

- Responsible for delivery and operational integrity.

2. AI governance and assurance (second line)

- Defines control objectives, policy requirements and risk frameworks.

- Evaluates whether AI systems meet organisational and regulatory expectations.

- Responsible for governance and risk decisions — not system implementation.

3. AI change enablement

- Enables and embeds the organisational, role and capability changes required to adopt AI-enabled ways of working responsibly.

- Analyses how AI affects jobs, skills and ways of working.

- Designs and delivers role-relevant learning to support assurance by design.

- Enables sustainable adoption without owning AI delivery or governance.

- Responsible for adoption and capability change — not engineering or assurance.

4. Independent AI audit and oversight (third line)

- Provides independent evaluation of governance effectiveness and control adequacy.

- Responsible for assurance opinion and independent challenge — not control design or operational remediation.

Indicative SFIA skills and AI literacy/knowledge requirements

| | AI governance and assurance | AI engineering / operations (assurance by design) | AI change enablement (assurance by design) | Independent AI audit and oversight |
|---|---|---|---|---|
| Purpose | Define enforceable control expectations and evaluate risk realistically without owning engineering. | Design and operate AI systems responsibly within defined governance boundaries. | Enable and embed the organisational, role and capability changes required to adopt AI-enabled ways of working responsibly. | Independently validate the adequacy, effectiveness and evidentiary basis of AI governance, risk and control claims, without participating in control design or remediation. |
| SFIA skills | | Dependent on specific role, e.g. design v development v operations | | |
| Illustrative literacy required | Technical literacy / knowledge | Governance / assurance literacy | Technical literacy / knowledge | Technical literacy / knowledge |

Why levels of responsibility matter

AI capability is not only about skills — it is about accountability and impact.

SFIA’s seven levels describe increasing responsibility:

- from following and assisting

- to applying and enabling

- to ensuring, advising and influencing

- to setting strategy and mobilising organisations

In AI contexts this becomes critical.

For example:

- A Level 4 engineer may implement model monitoring controls.

- A Level 5 governance lead may ensure those controls are mandated and embedded.

- A Level 6 executive may initiate enterprise-wide AI risk policy.

- A Level 7 board-level role sets organisational AI risk appetite.

The skill may be similar. The accountability is not.

This prevents:

- role overlap

- governance drift into engineering

- audit independence erosion

- level inflation
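As a rough illustration of how level inflation might be spotted, the sketch below maps the four example activities above to the levels they illustrate and compares a role's claimed level with the highest level its activities evidence. The names `EXAMPLE_ACTIVITY_LEVELS`, `evidenced_level` and `is_level_inflated` are hypothetical, and the comparison rule is an assumption made for this example, not a SFIA definition.

```python
# Example activities from the text, keyed to the SFIA level each one illustrates.
EXAMPLE_ACTIVITY_LEVELS = {
    "implement model monitoring controls": 4,
    "ensure controls are mandated and embedded": 5,
    "initiate enterprise-wide AI risk policy": 6,
    "set organisational AI risk appetite": 7,
}


def evidenced_level(activities: list[str]) -> int:
    """Highest level supported by the activities a role actually performs."""
    return max(EXAMPLE_ACTIVITY_LEVELS[activity] for activity in activities)


def is_level_inflated(claimed_level: int, activities: list[str]) -> bool:
    """Assumed rule: a role is inflated if it claims more than its activities evidence."""
    return claimed_level > evidenced_level(activities)


# A role graded at Level 6 whose only activity is implementing controls.
print(is_level_inflated(6, ["implement model monitoring controls"]))  # True

# A Level 5 role that both implements and ensures controls.
print(is_level_inflated(5, [
    "implement model monitoring controls",
    "ensure controls are mandated and embedded",
]))  # False
```

In practice a level is judged against the full SFIA level descriptions rather than a lookup table; the table only makes the point that the level follows from accountability, not from the title or the skill name.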

Skills and literacy: two dimensions of capability

Each archetype combines:

1. SFIA skills (tasks, activities, responsibilities)

These define the activities and responsibilities the role is accountable for performing — for example:

- risk management

- governance

- audit

- machine learning

- programming

- organisational change

2. Adjacent literacy (knowledge)

These describe what the role must understand in order to apply its skills effectively.

For example:

- governance roles need technical literacy in AI architectures and lifecycle risks

- engineering roles need governance literacy regarding regulatory expectations

- auditors require deeper technical interrogation capability without operational ownership

Skills define who owns what.

Literacy supports credible cross-functional working.

Assurance by design vs governance vs independent assurance

AI assurance occurs at different layers:

- Assurance by design — controls embedded during engineering and change

- Governance and oversight — definition of expectations and evaluation of risk

- Independent audit — objective assessment of adequacy and effectiveness

SFIA allows these to be described as distinct but coordinated roles, rather than conflating them under a single “AI governance” label.

This separation protects:

- independence

- clarity of accountability

- regulatory defensibility

- professional integrity

Why use role archetypes?

Role archetypes are not job descriptions and they are not organisational mandates. They are structured patterns of responsibility that clarify how different types of accountability fit together.

In AI assurance and governance, these archetypes are deliberately distinct. Separation between the following roles is not optional:

- engineering and operations

- governance and risk oversight

- change enablement

- independent audit

This separation protects independence, prevents self-assurance and supports regulatory defensibility.
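A separation check of this kind can be sketched in a few lines. The example assumes a simple list of (person, archetype) assignments and treats any person holding more than one archetype as a conflict. The function name `find_separation_conflicts`, the data shape and the people named are hypothetical, and a real organisation might apply a narrower rule, for example only flagging combinations that involve independent audit.

```python
from collections import defaultdict

# The four archetypes named in the text.
ARCHETYPES = {
    "engineering and operations",
    "governance and risk oversight",
    "change enablement",
    "independent audit",
}


def find_separation_conflicts(
    assignments: list[tuple[str, str]],
) -> dict[str, list[str]]:
    """Return each person who holds more than one archetype, with the archetypes held."""
    held: dict[str, set[str]] = defaultdict(set)
    for person, archetype in assignments:
        if archetype not in ARCHETYPES:
            raise ValueError(f"unknown archetype: {archetype!r}")
        held[person].add(archetype)
    # Assumed rule: any overlap counts as a separation-of-duties conflict.
    return {
        person: sorted(archetypes)
        for person, archetypes in held.items()
        if len(archetypes) > 1
    }


# Hypothetical assignments, for illustration only.
assignments = [
    ("Asha", "engineering and operations"),
    ("Ben", "governance and risk oversight"),
    ("Ben", "independent audit"),
    ("Chen", "change enablement"),
]

print(find_separation_conflicts(assignments))
# {'Ben': ['governance and risk oversight', 'independent audit']}
```

Here Ben both defines the controls and audits them, which is the self-assurance the separation is meant to prevent.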

A levelled archetype does not imply that every organisation needs all archetypes operating at all levels of responsibility. It shows how responsibilities can scale — from operational application to strategic direction — where required.

The archetypes provide a disciplined reference model. Organisations determine the appropriate scale and distribution, while keeping accountability boundaries clear.

A practical workforce planning tool

Using SFIA for AI assurance and governance helps organisations:

- define clear ownership boundaries across lines of defence

- avoid duplication or role drift between delivery, governance and oversight

- scale capability proportionately to organisational size and AI risk exposure

- identify development pathways and progression aligned to levels of responsibility

- integrate AI-related responsibilities into existing enterprise roles rather than creating unnecessary new silos

This supports coherent role design while respecting necessary separation of duties.

A contemporary capability issue

AI governance is not purely a policy challenge and not purely a technical one. It is a workforce capability design problem:

- who is accountable

- at what level of responsibility

- for which activities

- with what degree of authority and influence

SFIA provides a neutral, internationally recognised structure to answer those questions consistently.

The interactive grid illustrates how:

- different archetypes align to different SFIA skills

- those skills operate at different levels of responsibility

- responsibilities scale from operational to strategic

- accountability can be distinguished clearly without inventing new frameworks

Why this matters now

Regulators increasingly expect organisations to demonstrate:

- clear accountability

- defined control ownership

- independent oversight

- sustainable operational embedding

SFIA does not prescribe how AI should be governed.

It provides a structured way to describe who is responsible for what — at the appropriate level of authority and impact.

For organisations addressing AI assurance and governance, that clarity can be a valuable starting point.